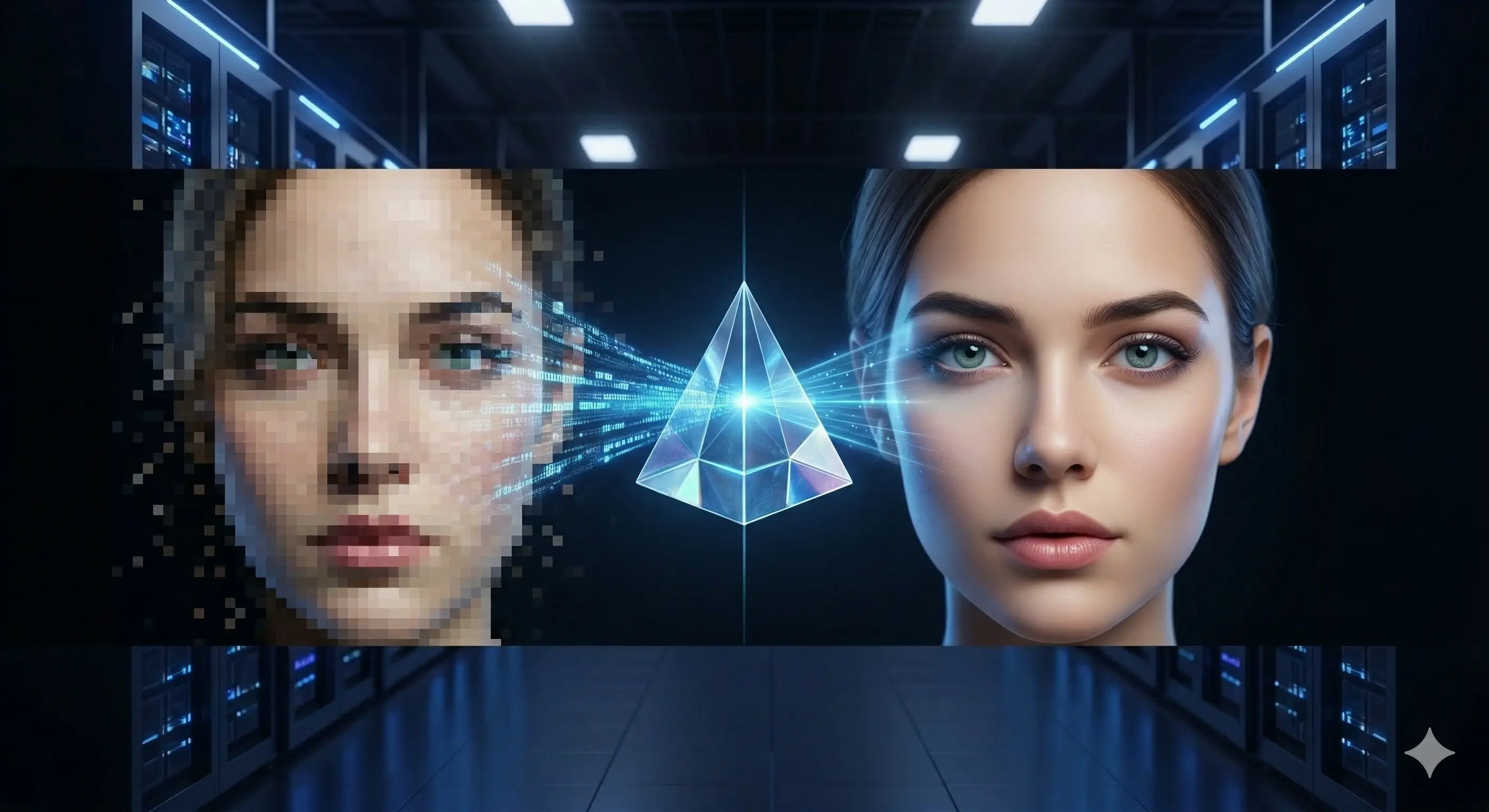

Transform Your Video Content

From pixelated archives to crystal-clear 4K — powered by distributed GPU computing.

GPUNet leverages cutting-edge AI models like Real-ESRGAN, RIFE, and DAIN to upscale, interpolate, and enhance video across distributed GPU clusters at unprecedented speed.

Every component of GPUNet has been engineered to maximise throughput and minimise latency across distributed GPU workloads.

Bidirectional gRPC streaming pushes task metadata to idle workers with sub-millisecond overhead. Binary frame data travels over HTTP/3 (QUIC) for maximum throughput.

Real-ESRGAN and custom ONNX models transform SD content to 4K with stunning clarity. Supports 2x and 4x upscaling modes.

Generate intermediate frames using RIFE, DAIN, or SVP methods. Boost video frame rates for smoother, more cinematic playback.

Automatic detection of VRAM capacity, compute capability, and optimal batch sizes. Every worker runs at peak efficiency — zero configuration.
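A minimal sketch of how a batch size could be derived from detected VRAM. The function name, the 20% safety reserve, and the batch cap are illustrative assumptions, not GPUNet's actual tuning logic.

```python
def pick_batch_size(vram_bytes: int, bytes_per_frame: int,
                    reserve_fraction: float = 0.2, max_batch: int = 16) -> int:
    """Return the largest batch that fits in VRAM after holding back a reserve.

    All parameters are hypothetical: bytes_per_frame would come from profiling
    the model, and reserve_fraction leaves headroom for weights and workspace.
    """
    usable = int(vram_bytes * (1.0 - reserve_fraction))
    fit = usable // bytes_per_frame
    # Always process at least one frame, never more than the cap.
    return max(1, min(max_batch, fit))
```

With 24 GiB of VRAM and an assumed 3 GiB per frame, `pick_batch_size(24 * 2**30, 3 * 2**30)` settles on a batch of 6.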

Raft-inspired cluster replication with leader election, log synchronisation, and vector clocks. No single point of failure.
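For readers unfamiliar with vector clocks, here is a small generic sketch of how they order events across nodes. It illustrates the general technique only; the node names and helper functions are assumptions, not GPUNet's replication code.

```python
from typing import Dict

VectorClock = Dict[str, int]

def tick(clock: VectorClock, node: str) -> VectorClock:
    """Return a copy of the clock with this node's counter advanced by one."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated

def merge(a: VectorClock, b: VectorClock) -> VectorClock:
    """Element-wise maximum, applied when a node receives a remote clock."""
    return {k: max(a.get(k, 0), b.get(k, 0)) for k in set(a) | set(b)}

def happened_before(a: VectorClock, b: VectorClock) -> bool:
    """True if a causally precedes b: no counter larger, at least one smaller."""
    keys = set(a) | set(b)
    no_larger = all(a.get(k, 0) <= b.get(k, 0) for k in keys)
    some_smaller = any(a.get(k, 0) < b.get(k, 0) for k in keys)
    return no_larger and some_smaller
```

Two clocks where neither happened before the other are concurrent, which is the case a replication layer has to resolve explicitly.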

Workers earn tokens for each processed frame with performance bonuses. Built-in incentive system for distributed contributor networks.

Eight algorithms working together. Each handles a different aspect of the problem — from fairness to prediction to market dynamics.

20% of jobs generate 80% of load. We identify them and route accordingly.
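One plausible way to find the heavy jobs is to sort by load and take the smallest set that covers 80% of the total. The function name and the input shape below are assumptions made for illustration.

```python
def heavy_hitters(load_by_job: dict, share: float = 0.8) -> list:
    """Return the fewest jobs, heaviest first, that cover `share` of total load."""
    total = sum(load_by_job.values())
    picked, running = [], 0.0
    ranked = sorted(load_by_job.items(), key=lambda kv: kv[1], reverse=True)
    for job, load in ranked:
        # Stop once the selected jobs already account for the target share.
        if total == 0 or running >= share * total:
            break
        picked.append(job)
        running += load
    return picked
```

For loads of 50, 30, 10, 5, and 5 units, the first two jobs already carry 80% of the work and would be the ones routed separately.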

New workers start on probation. Build history, earn Elite status and 20% bonus tokens.
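A sketch of how tiers and the Elite bonus might translate into tokens. Only the 20% Elite bonus comes from the text above; the tier thresholds, the "standard" tier name, and the integer token unit are hypothetical.

```python
# Bonus percentages per tier. Only the Elite figure is stated on this page.
BONUS_PERCENT = {"probation": 0, "standard": 0, "elite": 20}

def tier_for(completed_tasks: int, success_rate: float) -> str:
    """Assign a tier from work history (thresholds are illustrative)."""
    if completed_tasks < 50:
        return "probation"
    if completed_tasks >= 500 and success_rate >= 0.98:
        return "elite"
    return "standard"

def reward(frames: int, tokens_per_frame: int, tier: str) -> int:
    """Tokens earned for a batch of frames, using integer math to avoid drift."""
    base = frames * tokens_per_frame
    return base * (100 + BONUS_PERCENT[tier]) // 100
```

Under these assumptions, 100 frames at 10 tokens each pay 1000 tokens to a standard worker and 1200 to an Elite one.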

When tasks queue up, PMX crossover evolves the optimal assignment in milliseconds.
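PMX (partially mapped crossover) combines two candidate orderings while keeping the result a valid permutation. The sketch below is the textbook operator; how GPUNet encodes assignments or scores them is not described here, so treat the permutation as an assumed task ordering.

```python
def pmx(parent_a: list, parent_b: list, lo: int, hi: int) -> list:
    """Partially mapped crossover on two permutations of the same items.

    The child copies parent_a[lo:hi] and fills the remaining positions from
    parent_b, following the segment mapping whenever a value would repeat.
    """
    size = len(parent_a)
    child = [None] * size
    child[lo:hi] = parent_a[lo:hi]
    segment = set(parent_a[lo:hi])
    # Map each value copied from parent_a to the value parent_b held there.
    mapping = {parent_a[i]: parent_b[i] for i in range(lo, hi)}
    for i in list(range(0, lo)) + list(range(hi, size)):
        gene = parent_b[i]
        while gene in segment:
            gene = mapping[gene]
        child[i] = gene
    return child
```

In a genetic scheduler, each child would be scored by a fitness function (for example, estimated makespan) and the best candidates kept for the next generation.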

Long-running tasks get demoted. Short jobs stay responsive. No starvation.
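This behaviour matches a multi-level feedback queue. A minimal sketch follows, with assumed time quanta and class name. It omits the periodic priority boost that a real scheduler would typically add to guarantee progress for demoted tasks.

```python
from collections import deque

class MultiLevelFeedbackQueue:
    """Tasks that outlive their quantum drop to a lower-priority level."""

    def __init__(self, quanta=(1, 4, 16)):
        self.quanta = quanta  # work units allowed per turn at each level
        self.levels = [deque() for _ in quanta]

    def submit(self, task_id, remaining):
        # New tasks always enter at the top level.
        self.levels[0].append((task_id, remaining))

    def step(self):
        """Run the next task for one quantum; return (task_id, finished)."""
        for level, queue in enumerate(self.levels):
            if queue:
                task_id, remaining = queue.popleft()
                remaining -= self.quanta[level]
                if remaining > 0:
                    lower = min(level + 1, len(self.levels) - 1)
                    self.levels[lower].append((task_id, remaining))
                    return task_id, False
                return task_id, True
        return None  # nothing queued
```

A one-unit task submitted behind a ten-unit task finishes on the second step, because the long task is demoted after its first quantum.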

Rate limits per worker, per job. Nobody monopolises the cluster.
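A token bucket keyed by worker and job is one common way to enforce limits like these. The classes and parameters below are illustrative assumptions; time is passed in explicitly so the behaviour is easy to reason about.

```python
class TokenBucket:
    """Refills at `rate` tokens per second up to `capacity`."""

    def __init__(self, rate: float, capacity: float):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.last = 0.0

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Credit tokens for the time elapsed since the previous call.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False

class RateLimiter:
    """One independent bucket per (worker, job) pair."""

    def __init__(self, rate: float, capacity: float):
        self.rate, self.capacity = rate, capacity
        self.buckets = {}

    def allow(self, worker_id: str, job_id: str, now: float) -> bool:
        key = (worker_id, job_id)
        if key not in self.buckets:
            self.buckets[key] = TokenBucket(self.rate, self.capacity)
        return self.buckets[key].allow(now)
```

Because each pair has its own bucket, one busy worker exhausting its allowance does not slow down any other worker on the same job.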

Urgent tasks jump the queue. Aging prevents indefinite waits.
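Aging can be modelled as a priority bonus that grows with waiting time. The linear formula, the rate of 0.1 per second, and the task fields below are assumptions chosen to keep the sketch short.

```python
def effective_priority(base_priority: float, waited_seconds: float,
                       aging_rate: float = 0.1) -> float:
    """Base priority plus a bonus that grows linearly with time in the queue."""
    return base_priority + aging_rate * waited_seconds

def pick_next(tasks: list, now: float) -> dict:
    """Choose the task with the highest effective priority at time `now`."""
    return max(
        tasks,
        key=lambda t: effective_priority(t["priority"], now - t["enqueued_at"]),
    )
```

A fresh urgent task beats a low-priority task that has waited 20 seconds, but loses to one that has waited 200 seconds, so nothing waits forever.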

Historical data builds confidence intervals. We know how long your job will take.
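As a rough illustration, a job estimate could scale a confidence interval on mean per-frame time by the frame count. The normal approximation (z = 1.96 for roughly 95%) and the function name are assumptions, and the result is total compute time, not wall-clock time across a cluster.

```python
import math
import statistics

def estimate_job_seconds(frame_times: list, frame_count: int, z: float = 1.96):
    """Return (low, expected, high) compute seconds for a job.

    frame_times holds past per-frame durations and needs at least two
    samples, since the sample standard deviation is undefined for one.
    """
    mean = statistics.mean(frame_times)
    sem = statistics.stdev(frame_times) / math.sqrt(len(frame_times))
    low = frame_count * (mean - z * sem)
    high = frame_count * (mean + z * sem)
    return low, frame_count * mean, high
```

More history shrinks the standard error, so the interval tightens as a worker processes more frames.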

Workers bid for tasks. Market prices balance supply and demand automatically.
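The page does not say which auction format is used. As one possible mechanism, the sketch below awards a task to the lowest asking price and pays the second-lowest ask (a reverse second-price auction), which is an assumption chosen purely for illustration.

```python
def award_task(bids: dict):
    """Pick a winner from worker_id -> asking price in tokens.

    The lowest ask wins and is paid the second-lowest ask. Ties are broken
    by worker id so the outcome is deterministic.
    """
    if not bids:
        return None
    ranked = sorted(bids.items(), key=lambda kv: (kv[1], kv[0]))
    winner, ask = ranked[0]
    payment = ranked[1][1] if len(ranked) > 1 else ask
    return winner, payment
```

When many workers are idle, asks fall and tasks get cheaper; when capacity is scarce, the clearing price rises.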


A fully automated pipeline handles every stage of distributed video processing.

User uploads a video via HTTP/3 through the web portal, selects an upscaling model (e.g. Real-ESRGAN 4x), and optionally enables frame interpolation.

The master server uses FFmpeg to split the video into individual PNG frames. Each frame becomes an independent task, queued in Redis by priority.
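A sketch of this stage: building the FFmpeg command that extracts PNG frames, then queuing one task per frame by priority. The file naming pattern and helper names are assumptions, and an in-memory heap stands in for Redis here.

```python
import heapq

def split_command(video_path: str, out_dir: str) -> list:
    """FFmpeg arguments that write every frame as a numbered PNG."""
    return ["ffmpeg", "-i", video_path, f"{out_dir}/frame_%06d.png"]

def enqueue_frames(queue: list, job_id: str, frame_count: int,
                   priority: int) -> None:
    """Push one task per frame; higher priority values are popped first."""
    for index in range(1, frame_count + 1):
        # heapq is a min-heap, so the priority is negated.
        heapq.heappush(queue, (-priority, job_id, index))

def next_task(queue: list):
    """Pop the highest-priority frame task, or None when the queue is empty."""
    if not queue:
        return None
    neg_priority, job_id, index = heapq.heappop(queue)
    return job_id, index
```

The command list can be passed to `subprocess.run(..., check=True)` on a machine with FFmpeg installed.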

The push-based distributor sends task metadata over gRPC to idle workers, least-loaded first. Workers then fetch frame data directly over HTTP/3 (QUIC).
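Least-loaded selection can be sketched as picking the worker with the lowest utilisation among those with spare capacity. The worker record fields below are hypothetical.

```python
def pick_worker(workers: list):
    """Return the id of the least-loaded worker with free capacity, or None."""
    available = [w for w in workers if w["active_tasks"] < w["capacity"]]
    if not available:
        return None
    # Compare by utilisation so large and small GPUs are treated fairly.
    best = min(available, key=lambda w: w["active_tasks"] / w["capacity"])
    return best["id"]
```

Comparing utilisation instead of raw task counts keeps a worker with eight slots and two tasks ahead of a worker with two slots and one task.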

Each worker runs the ONNX model on its GPU (CUDA, CoreML, or CPU fallback) with auto-tuned tile sizes and batch parameters. Post-processing filters are applied automatically.
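Tiling splits a frame into regions small enough to fit in GPU memory. The sketch below computes tile rectangles with an optional overlap; the function names and the default tile size used in the example are assumptions, and blending of overlapping regions is left out.

```python
def _starts(length: int, tile: int, step: int) -> list:
    """Offsets along one axis, stopping once a tile reaches the far edge."""
    positions, pos = [], 0
    while True:
        positions.append(pos)
        if pos + tile >= length:
            return positions
        pos += step

def tile_boxes(width: int, height: int, tile: int, overlap: int = 0) -> list:
    """Return (left, top, right, bottom) boxes that cover the whole frame."""
    step = tile - overlap
    if step <= 0:
        raise ValueError("overlap must be smaller than the tile size")
    return [
        (left, top, min(left + tile, width), min(top + tile, height))
        for top in _starts(height, tile, step)
        for left in _starts(width, tile, step)
    ]
```

A 1920x1080 frame with 512-pixel tiles and no overlap yields a 4 by 3 grid of 12 tiles, with the edge tiles clipped to the frame.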

Processed frames are uploaded back to the server via HTTP/3 for maximum throughput. The server tracks progress in real time and awards tokens to the contributing worker.

Once all frames are collected, FFmpeg merges them into the final video. The user receives a notification and can download the enhanced result.
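A sketch of the final stage: check that every frame has arrived, then build the FFmpeg command that reassembles them. The frame naming pattern, the H.264 encoder choice, and the helper names are assumptions for illustration.

```python
def missing_frames(received: set, frame_count: int) -> list:
    """Frame numbers (1-based) that have not been uploaded yet."""
    return sorted(set(range(1, frame_count + 1)) - set(received))

def merge_command(frames_dir: str, fps: int, output_path: str) -> list:
    """FFmpeg arguments that encode numbered PNG frames into one video."""
    return [
        "ffmpeg",
        "-framerate", str(fps),
        "-i", f"{frames_dir}/frame_%06d.png",
        "-c:v", "libx264",
        "-pix_fmt", "yuv420p",  # widely compatible pixel format
        output_path,
    ]
```

The merge should only run once `missing_frames` returns an empty list. Audio from the original upload would need to be copied across in a separate step.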

A layered architecture separating user interfaces, orchestration, storage, and compute.

gRPC control plane + HTTP/3 data plane with hybrid task distribution

Measured with the Real-ESRGAN 4x model, upscaling 1080p frames to 4K resolution.

| GPU | VRAM | Throughput | Time per Frame |
|---|---|---|---|
| NVIDIA RTX 4090 | 0 GB | ~0 FPS | 0 ms |
| NVIDIA RTX 4080 | 0 GB | ~0 FPS | 0 ms |
| NVIDIA RTX 3090 | 0 GB | ~0 FPS | 0 ms |
| NVIDIA RTX 4070 Ti | 0 GB | ~0 FPS | 0 ms |
| Apple M2 Pro | 0 GB | ~0 FPS | 0 ms |
| CPU (16 cores) | — | ~0 FPS | 0 ms |

GPUNet workers run natively across every major operating system and architecture.

Every connection is encrypted, every request is signed, every worker is verified.

Workers authenticate using elliptic-curve keypairs with challenge-response verification. No passwords, no shared secrets.

All connections use TLS 1.3: gRPC over HTTP/2, data transfers over HTTP/3 (QUIC). Frame data stays confidential in transit.

Every worker request is signed with the worker's private key. The server verifies each signature before processing, so tampered requests are rejected.

Built-in rate limiting protects against abuse. Strict input validation on all endpoints prevents injection and overflow attacks.

GPUNet supports leading AI models for video enhancement, all running via optimised ONNX Runtime.

A modern Rust-native stack optimised for performance, safety, and reliability.